
In May 2021, President Biden signed Executive Order 14028 to improve the cybersecurity of US Government software, including third-party software from vendors selling to any US Government agency. Following the Executive Order, the Office of Management and Budget (OMB) released a memorandum detailing requirements for federal agencies to follow the NIST Secure Software Development Framework (SSDF), SP 800-218, and the NIST Software Supply Chain Security Guidance for any software sold to a US Government entity.

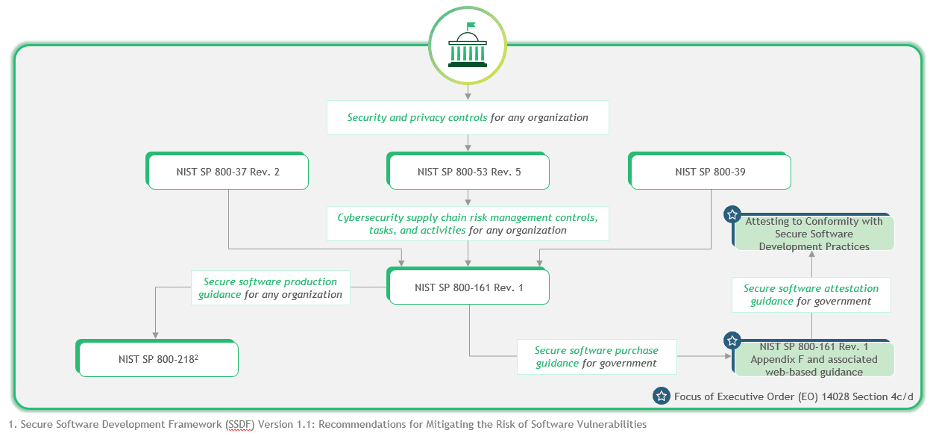

Relationship map between SSDF (SP 800-218) and Software Supply Chain Security Guidance (EO 14028, Section 4(d)), Source: Executive Order 14028, Improving the Nation’s Cybersecurity

Apiiro contributed to SP 800-218 and helped define the security best practices listed in the document, which is meant to improve the security of any development environment, including those in the private sector. The Executive Order focuses on developers and agencies that work with the US Government, but these best practices and guidelines can help any organization. SP 800-218 (SSDF version 1.1) defines 19 practices for secure software development; here is a summary of the key ones to help you follow them.

Everyone in the software development lifecycle, from the top down, should be on board with the way security is handled. Good security practices are a collaborative effort so that nothing falls through the cracks. Policies should be defined and made available to anyone within the organization in one location so that nothing is duplicated. Documents that should be easily available include security requirements from internal sources (e.g., the organization’s policies, business objectives, and risk management strategy) and external sources (e.g., applicable laws and regulations).

Roles and responsibilities should be defined so that everyone knows what they must do to secure the software development lifecycle (SDLC). For example, a developer is responsible for examining alerts about code vulnerabilities and remediating them before compiled software is deployed, and operations staff are responsible for securing cloud configurations and deploying the infrastructure that supports the software.

When designing your security policies, use the NIST framework to secure the SDLC from code creation through deployment and post-deployment monitoring. Applying the framework helps tighten access controls and reduces the risk that common threats affect your software.

Bottom line – Clearly define security requirements, roles, and responsibilities, and share the information across the organization in a centralized manner.

Humans make errors, so you can improve the security of your SDLC by automating repeatable steps that would otherwise require manual intervention. Automation reduces human effort and improves the accuracy, reproducibility, usability, and comprehensiveness of security practices throughout the SDLC, and it provides a way to document and demonstrate the use of these practices. Tools may be used at different levels of the organization, such as organization-wide or project-specific, and may address a particular part of the SDLC, like a build pipeline.

For example, scanning code for vulnerabilities during development can be automated to catch common coding errors. A Static Application Security Testing (SAST) tool analyzes source code without running it, typically in the developer’s IDE or in the build pipeline, so developers get feedback before code is deployed to production. With SAST in place, developers can remediate bugs before they reach production, and because the scans run automatically, they do not depend on someone remembering to run them.
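To make the idea of static analysis concrete (inspecting code without executing it), here is a minimal sketch that uses Python’s standard `ast` module to flag calls to `eval` and `exec`. The rule set and the `scan_source` and `Finding` names are hypothetical illustrations; real SAST tools ship far larger rule sets and perform data-flow analysis that this toy does not.

```python
import ast
from dataclasses import dataclass

# Hypothetical rule set: builtins this toy scanner treats as risky.
RISKY_CALLS = {"eval", "exec"}


@dataclass
class Finding:
    line: int
    call: str
    message: str


def scan_source(source: str) -> list[Finding]:
    """Parse Python source (without running it) and flag calls to risky builtins."""
    findings = []
    tree = ast.parse(source)
    for node in ast.walk(tree):
        # Only plain-name calls such as eval(...) are matched; obj.eval(...) is ignored.
        if isinstance(node, ast.Call) and isinstance(node.func, ast.Name):
            if node.func.id in RISKY_CALLS:
                findings.append(
                    Finding(node.lineno, node.func.id, f"avoid {node.func.id}() on untrusted input")
                )
    # ast.walk is breadth-first, so sort to report findings in source order.
    return sorted(findings, key=lambda f: f.line)


if __name__ == "__main__":
    sample = "x = input()\nresult = eval(x)\nprint(result)\n"
    for f in scan_source(sample):
        print(f"line {f.line}: {f.message}")
```

In a pipeline, a non-empty result could fail the build so the finding is fixed before merge; that gating policy is an assumption for illustration, not something the SSDF prescribes.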

DevOps relies heavily on automation, and infrastructure-as-code deployment tools reduce the risk of misconfigurations. Operations staff can script infrastructure configurations so that repeatable cloud deployments are not exposed to incorrect security settings, access controls, or resource provisioning.
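Infrastructure-as-code tools such as Terraform or AWS CloudFormation fill this role in practice. Without relying on either tool’s syntax, the sketch below shows the underlying idea in plain Python: every environment’s configuration is rendered from one secure baseline, and per-environment overrides may not weaken settings marked as locked. `SECURE_BASELINE`, `LOCKED_KEYS`, and `render_config` are hypothetical names, and the settings themselves are illustrative.

```python
from copy import deepcopy

# Hypothetical secure defaults that every environment starts from.
SECURE_BASELINE = {
    "storage": {"public_access": False, "encryption_at_rest": True},
    "network": {"allow_ssh_from": [], "tls_min_version": "1.2"},
    "instance_count": 2,
}

# (section, key) pairs that an environment override is not allowed to change.
LOCKED_KEYS = {
    ("storage", "public_access"),
    ("storage", "encryption_at_rest"),
    ("network", "tls_min_version"),
}


def render_config(overrides: dict) -> dict:
    """Merge per-environment overrides onto the baseline, rejecting changes to locked keys."""
    config = deepcopy(SECURE_BASELINE)  # never mutate the shared baseline
    for section, values in overrides.items():
        if isinstance(values, dict):
            for key, value in values.items():
                if (section, key) in LOCKED_KEYS and value != SECURE_BASELINE[section][key]:
                    raise ValueError(f"override of locked setting {section}.{key} is not allowed")
                config.setdefault(section, {})[key] = value
        else:
            # Scalar settings (e.g. instance_count) are replaced directly.
            config[section] = values
    return config
```

Because every deployment goes through the same function, a production and a staging environment can differ in size or allowed SSH sources, but neither can silently turn on public storage access.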

Bottom line – Invest in security automation across your SDLC, and aim for early detection (a “Shift Left” approach) wherever you can.

It should go without saying that the software development environment is a target for threats, so it’s important to keep it secure from external and internal attackers. Access to the code environment gives attackers several ways to steal data or intellectual property. Every environment in the development lifecycle, including staging, testing, and deployment, must be secured with the right access controls.

Unauthorized changes to code can take months to detect. In a sufficiently sophisticated attack, modified code could be used to spread malware to customers, steal customer data, or provide remote access to a development machine. With remote access, an attacker could steal proprietary secrets or compromise the environment further to steal data.

In addition to the development environment, the deployment and change control environments should be strictly monitored. Tampering with these environments could hide code compromises and allow an attacker to push changes to public repositories, where customers would be affected. Supply chain attacks affect both your internal software and any software you offer to customers, potentially leaving thousands of organizations vulnerable to threats.

Change control software should be set up to archive deprecated and older versions for auditing purposes. Most third-party change control and versioning software archives code for you, but you should still take backups so that you can review past versions during an investigation after a security incident.
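One simple way to make archived releases auditable is to record a SHA-256 digest of every file in a release and store that manifest with the archive; the SSDF’s PS.2 practice calls for a mechanism to verify release integrity. The sketch assumes each release is a plain directory on disk, and `build_manifest` and `verify_manifest` are hypothetical helpers; a production setup would also sign the manifest so it cannot be tampered with alongside the files.

```python
import hashlib
from pathlib import Path


def sha256_file(path: Path) -> str:
    """Return the SHA-256 hex digest of a file, read in chunks to handle large artifacts."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(release_dir: Path) -> dict[str, str]:
    """Map each file's path (relative to release_dir) to its SHA-256 digest."""
    return {
        str(p.relative_to(release_dir)): sha256_file(p)
        for p in sorted(release_dir.rglob("*"))
        if p.is_file()
    }


def verify_manifest(release_dir: Path, manifest: dict[str, str]) -> list[str]:
    """Return paths that were added, removed, or modified since the manifest was recorded."""
    current = build_manifest(release_dir)
    changed = {
        path
        for path in manifest.keys() | current.keys()
        if manifest.get(path) != current.get(path)
    }
    return sorted(changed)
```

Saving the manifest with `json.dumps(manifest)` next to the archived release lets investigators later call `verify_manifest` and see exactly which files, if any, differ from what was shipped.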

Bottom line – Treat your SDLC as you would any other critical part of your network environment, and put controls in place to detect and remediate threats.

Most organizations must comply with at least one regulatory standard. If you haven’t had your environment audited for security standard violations, you could be at risk of a compromise, litigation, and hefty fines. Compliance regulations include requirements specifically for data protection and security, and although they might require your organization to invest in additional infrastructure, they can improve your overall cybersecurity posture.

Auditing your SDLC environment for compliance usually requires a third-party review, but DevOps teams can also choose software vendors whose tools are built to support compliance. Non-compliant tools can put your organization at risk if they lack the right security controls. For example, if a tool you use exposes healthcare or payment card data, the entire organization could face fines and litigation for violating HIPAA or PCI DSS.

Bottom line – Review compliance regulations and ensure that you have the right infrastructure in place to avoid hefty fines for violations.

Whether your software runs in a user’s browser, on a server, or on a workstation, it should be penetration tested. A Dynamic Application Security Testing (DAST) tool automatically scans running software for common vulnerabilities, but a true penetration test requires human expertise. The best SDLC security combines automated tools with manual review so that obscure, sophisticated vulnerabilities are also identified.
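A DAST tool exercises a running application from the outside. One of the simplest such checks is whether HTTP responses carry common security headers. The sketch below assumes a small, illustrative header list; `EXPECTED_HEADERS`, `missing_security_headers`, and `check_url` are hypothetical names, and real scanners also send crafted inputs to probe for issues such as XSS. Only point `check_url` at an environment you are authorized to test.

```python
from urllib.request import urlopen

# Illustrative set of response headers to expect; not an exhaustive or official list.
EXPECTED_HEADERS = {
    "strict-transport-security",
    "content-security-policy",
    "x-content-type-options",
}


def missing_security_headers(headers: dict[str, str]) -> list[str]:
    """Return expected security headers absent from a response (names compared case-insensitively)."""
    present = {name.lower() for name in headers}
    return sorted(EXPECTED_HEADERS - present)


def check_url(url: str) -> list[str]:
    """Fetch a URL and report which expected security headers its response lacks."""
    with urlopen(url, timeout=10) as resp:
        return missing_security_headers(dict(resp.headers.items()))
```

Keeping the header logic separate from the network call makes it easy to run the same check against recorded responses in a test suite.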

Penetration testing is a sophisticated skill, so it often requires a third party, similar to audits for compliance. A penetration team provides you with a report so that you can understand vulnerabilities, review a proof of concept, and get remediation advice on what can be done to eradicate risks from your environment. After you remediate vulnerabilities from the report, the penetration team retests to ensure that you have completely fixed any issues.

Every version update or significant code change should be penetration tested again. If you work with a third party, they can test code frequently and perform new tests on software updates, patches, new applications, and new versions. Penetration testers look for vulnerabilities the same way an attacker would, so you can remediate issues before they become a vector for a data breach.

Bottom line – Improve security by having your software penetration tested for known vulnerabilities to avoid a data breach from scripted exploits.

Developer code can be a source of a data breach, but misconfigurations are also a major security issue for organizations. A single cloud misconfiguration can expose terabytes of data to the open internet. Cloud platforms often get a reputation for being insecure because of common misconfigurations, but the platforms themselves are generally secure; security in the cloud depends on administrators configuring access controls correctly.

It can be difficult to keep track of a DevOps environment where infrastructure changes constantly and configurations are deployed automatically alongside application code. Regularly reviewing your infrastructure configurations helps ensure that mistakes in data access permissions and security controls do not leave you vulnerable.
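Part of such a review can be automated. The sketch below flags firewall-style ingress rules that expose sensitive ports to the entire internet. The `IngressRule` shape is a simplified, hypothetical representation, not any particular cloud provider’s API format, and the port list is an assumption to tune for your environment.

```python
from dataclasses import dataclass
from ipaddress import ip_network

# Ports this sketch treats as sensitive if reachable from anywhere (an assumption).
SENSITIVE_PORTS = {22, 3389, 3306, 5432}  # SSH, RDP, MySQL, PostgreSQL


@dataclass
class IngressRule:
    port: int
    cidr: str  # source address range allowed in, e.g. "10.0.0.0/16"


def open_to_internet(rule: IngressRule) -> bool:
    """A /0 prefix (0.0.0.0/0 or ::/0) means any source address is allowed."""
    return ip_network(rule.cidr, strict=False).prefixlen == 0


def risky_rules(rules: list[IngressRule]) -> list[IngressRule]:
    """Return rules that open a sensitive port to the whole internet."""
    return [r for r in rules if r.port in SENSITIVE_PORTS and open_to_internet(r)]
```

A public HTTPS port passes this check by design; the goal is to catch administrative and database ports that were opened for convenience and never closed.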

Bottom line – Software isn’t the only target for attackers. Review environment configurations to ensure that they block unauthorized access to internal resources.

Research shows that it often takes organizations months to detect a compromise and even longer to contain and eradicate it. This large window of opportunity gives attackers plenty of time to install backdoors, take control of workstations and network infrastructure, and exfiltrate data to an offsite server. Once a breach is detected, incident response should be quick: the longer an attacker remains on the network, the more data can be stolen and the more damage can be done.

Organizations and DevOps teams can reduce an attacker’s window of opportunity in several ways. The primary defense against a long-term compromise is using the right monitoring tools. You might need several tools to fully monitor the environment, but together they should watch for common vulnerabilities and attack patterns such as Cross-Site Scripting (XSS), credentials and sensitive data stored in source code, misconfigured access controls, session theft, directory browsing, brute forcing, account takeovers, and the numerous other exploits available to an attacker.
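As one example of what such monitoring looks for, brute forcing typically shows up as a burst of failed logins from a single source. The sketch below flags source IPs with more than a threshold of failures inside a sliding time window. `LoginEvent`, `detect_brute_force`, and the default thresholds (more than 5 failures within 300 seconds) are hypothetical; production tools correlate far more signals, such as distributed attempts across many IPs.

```python
from collections import defaultdict, deque
from dataclasses import dataclass


@dataclass
class LoginEvent:
    timestamp: float  # seconds since the epoch
    source_ip: str
    success: bool


def detect_brute_force(
    events: list[LoginEvent], max_failures: int = 5, window_seconds: float = 300
) -> set[str]:
    """Return IPs with more than max_failures failed logins inside any sliding window."""
    recent: dict[str, deque] = defaultdict(deque)  # per-IP timestamps of recent failures
    flagged = set()
    for event in sorted(events, key=lambda e: e.timestamp):
        if event.success:
            continue
        window = recent[event.source_ip]
        window.append(event.timestamp)
        # Drop failures that have fallen out of the time window.
        while window and event.timestamp - window[0] > window_seconds:
            window.popleft()
        if len(window) > max_failures:
            flagged.add(event.source_ip)
    return flagged
```

Flagged IPs could feed an alert or a temporary block; how to respond is a policy decision outside this sketch.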

Another way to limit an attacker’s window of opportunity is to rotate private keys and other credentials on a regular schedule and to force password changes whenever there is evidence of compromise. (NIST SP 800-63B advises against requiring users to change memorized passwords arbitrarily, such as on a fixed periodic schedule.) After credential theft, an attacker uses stolen usernames and passwords to attempt authentication on your network. If credentials are rotated, an attacker who does authenticate has a much more limited window of opportunity than in an environment where credentials remain the same for months.
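Rotation only helps if stale credentials are noticed. A minimal sketch, assuming you can export each key’s ID and creation time from your key store: flag anything older than a maximum age. The 90-day default, the `Credential` type, and `keys_due_for_rotation` are hypothetical; set the age to match your own policy.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass
class Credential:
    key_id: str
    created_at: datetime  # timezone-aware creation time


def keys_due_for_rotation(
    creds: list[Credential],
    max_age: timedelta = timedelta(days=90),
    now: Optional[datetime] = None,
) -> list[str]:
    """Return IDs of keys strictly older than max_age."""
    if now is None:
        now = datetime.now(timezone.utc)
    return [c.key_id for c in creds if now - c.created_at > max_age]
```

Passing `now` explicitly keeps the check deterministic in tests; in a scheduled job you would leave it unset.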

Every new network resource adds complexity and new security risks. You can’t avoid adding infrastructure, but you can limit the risk by following best practices for access controls and permissions. Users should have access only to the resources they need to perform their job, and the environment should never grant overly permissive access.
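Overly permissive access often hides in wildcard grants. The sketch below scans a policy document loosely modeled on the AWS IAM JSON layout (`Statement`, `Effect`, `Action`, `Resource`) and returns `Allow` statements that grant every action, every action in a service (such as `s3:*`), or every resource. The function name and the exact rules are hypothetical; some wildcard resources are legitimate, so treat findings as items to review rather than automatic failures.

```python
def overly_permissive_statements(policy: dict) -> list[dict]:
    """Return Allow statements that grant wildcard actions or resources."""
    findings = []
    statements = policy.get("Statement", [])
    if isinstance(statements, dict):  # a single statement may be given as an object
        statements = [statements]
    for stmt in statements:
        if stmt.get("Effect") != "Allow":
            continue  # Deny statements restrict access, so wildcards there are fine
        actions = stmt.get("Action", [])
        resources = stmt.get("Resource", [])
        # Action and Resource may each be a single string or a list of strings.
        actions = [actions] if isinstance(actions, str) else actions
        resources = [resources] if isinstance(resources, str) else resources
        if any(a == "*" or a.endswith(":*") for a in actions) or "*" in resources:
            findings.append(stmt)
    return findings
```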

Don’t forget that human error increases the risk of a compromise. Employees, third-party vendors, and contractors all contribute to your attack surface. Everyone with access to corporate data should be trained to identify phishing, social engineering, and malware downloads. Better training combined with documented security policies helps reduce the risk of human error, which is one of the biggest risks to your corporate data and network security.

Bottom line – Limit the window of opportunity for exploits by reviewing current access controls and rotating keys in case of a compromise.

Part of a good incident response plan is using lessons learned as a driver for better security. After a compromise, the priority is to contain and eradicate it, but investigations into the source of the exploit should reveal where administrators went wrong. Using lessons learned, security infrastructure and policies can be improved to reduce the frequency of vulnerabilities in the future.

The final NIST guideline is to take the steps necessary to future-proof data security. Documenting and investigating each security incident strengthens your cybersecurity program and shows which changes should be made to better protect corporate data. Your cybersecurity policies should be reviewed annually to ensure that they cover the latest threats.

Bottom line – Learn from mistakes and take the appropriate steps to avoid the same ones in the future.

With these eight points in mind, develop your own strategies for building cybersecurity around the NIST guidelines. Each guideline provides a piece of the puzzle to make your environment safer from risks.

Learn from mistakes to build a more secure environment in the future to avoid the same pitfalls.

Implementing NIST’s Secure Software Development Framework (SSDF) might seem complex at first, but the right tools can relieve much of the overhead and frustration your DevOps and DevSecOps teams might experience. The framework does not dictate which software you must use, but the security tools you choose should support a well-structured security infrastructure that protects data privacy and the confidentiality of corporate trade secrets.

By following the NIST SSDF, any company creating software can reduce the number of vulnerabilities in its released software, reduce the potential impact of exploited vulnerabilities, and address the root causes of vulnerabilities to prevent them from recurring.

For deeper insight into the best practices to follow, watch the following video workshop from NIST, which brought together several industry experts to share their insights on secure software development tools and practices as they relate to software supply chain security under Executive Order 14028:

https://www.nist.gov/video/workshop-executive-order-14028-guidelines-enhancing-software-supply-chain-security-part-1

Apiiro is a strong supporter of and contributor to global standardization efforts and initiatives, including NIST SSDF. The company has been invoked multiple times in the above-mentioned Executive Order, which serves as a backbone of modern application security frameworks for agile, dynamic, cloud-native application development environments.

We appreciate the collaboration and fruitful brainstorming sessions that went into drafting the NIST SSDF paper, especially around contextual risk-based code changes, anomalous behavior detection, and application security marshaling within organizations. The shared knowledge and experience are based on extensive learnings from our practitioners, customers, and prospects.