Cookies Notice

This site uses cookies to deliver services and to analyze traffic.

📣 Introducing AI Threat Modeling: Preventing Risks Before Code Exists

Threat Analysis and Prevention That Understand Your Software Architecture – From Design, Code, and Runtime.

In the modern software development lifecycle (SDLC), the distance between a concept and its deployment has collapsed. Driven by AI-assisted coding and high-velocity continuous delivery, code is now generated and shipped faster than human-led security processes can ever hope to review. This shift accelerates the evolution of application security from Detection to Autonomous Prevention — a move from reactive scanning to proactive, secure-by-design development from the first keystroke.

A few months ago we took a major step toward this vision with the launch of the Apiiro Guardian Agent. In building this agent, our core thesis was simple: AI needs a map. To provide meaningful actionable security guidance, the AI coding agent must be grounded in the context of the application’s unique data flows, architecture, and runtime environments as well as in the organization’s policies, processes and business. Since then, Guardian Agent “secure prompt” capability has helped to create thousands of code commits with no vulnerabilities or compliance violations.

However, for fulfilling the autonomous prevention promise, looking at the code implementation alone is not sufficient. The most critical security failures are often not implementation bugs, but fundamental design flaws. Traditional threat modeling — the process of identifying these threats and defining the appropriate countermeasures to avoid them — is one of the last remaining manual bottlenecks in the SDLC. It relies on manual processes, static diagrams, intermittent workshops, stale tasks, and expert-level security knowledge that cannot scale with AI-driven development.

Today, we are excited to announce a significant expansion of the Guardian Agent’s capabilities: AI Threat Modeling. By leveraging the Apiiro Data Fabric, Guardian Agent can now automatically generate contextualized, dynamic and actionable threat models directly from product specifications and implementation tickets. This capability ensures that security considerations are foundational to every project, preventing vulnerable and non-compliant code from ever being written.

Threat modeling has always been a high-value exercise. Identifying a security risk during the design phase is much cheaper and less risky than fixing it after it has reached production. Yet, despite its importance, the traditional approach to threat modeling is fundamentally broken in the modern enterprise:

The Apiiro Guardian Agent was designed to address these challenges by operating as a “Principal Security Engineer” embedded throughout the SDLC. To do this effectively, the Agent relies on the Apiiro Data Fabric, a unified foundation consisting of our Software Graph™ and Risk Graph™.

This foundation provides the autonomous reasoning needed for AI Threat Modeling:

By adding AI Threat Modeling to its capabilities set, Guardian Agent moves the starting line of security even further “left” – from the first commit to the first keystroke of a product specification.

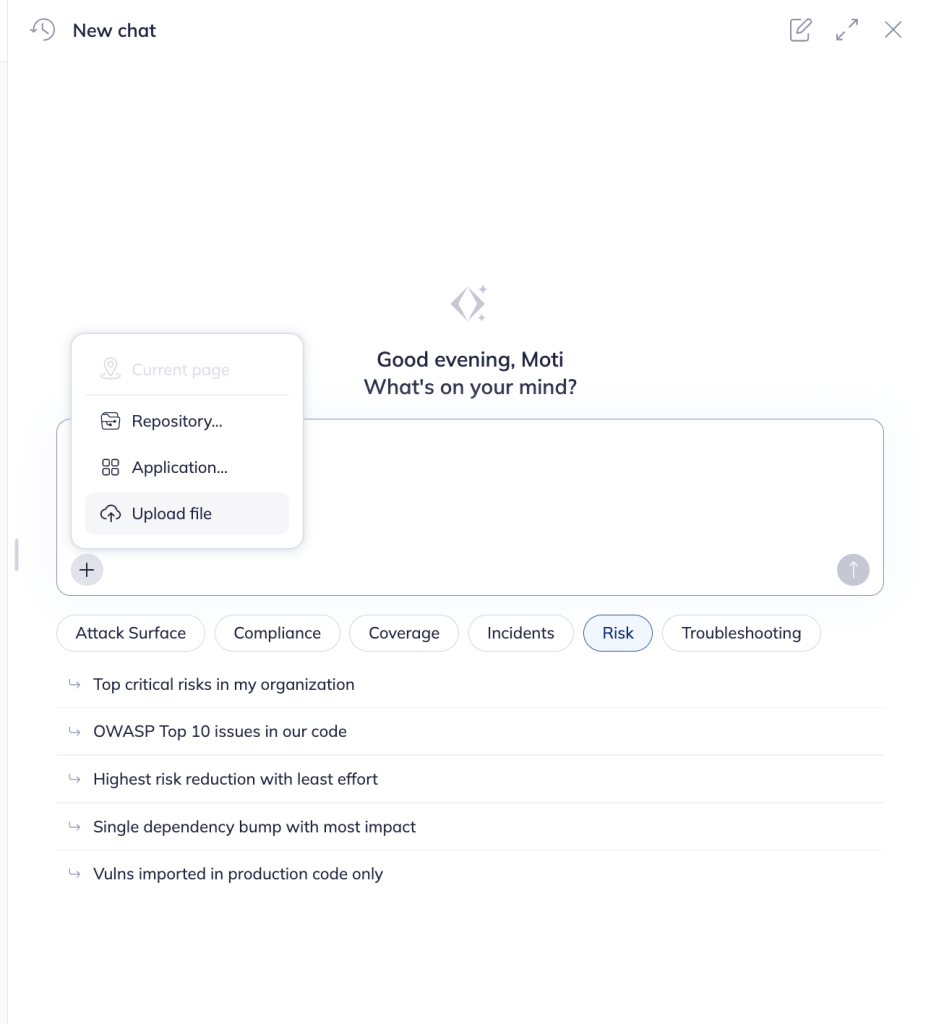

AppSec engineers or developers can initiate an on demand threat model for any new or existing design/feature by providing Guardian Agent with design documents, architectural diagrams, or even images of whiteboards. This can be done via the Apiiro portal or through the Guardian Agent chat.

In addition to manually initiating, organizations can guide the Guardian Agent to autonomously initiate threat models without an explicit human trigger:

New feature implementation rarely starts from an empty state. In the vast majority of cases, features are added to an existing application codebase, with its own unique characteristics and controls. Unlike standalone threat modeling tools that start with a blank canvas, AI Threat Modeling uses the information already present in your code. It analyzes the intent of the new feature design and cross-references it with the existing state of your software estate.

It uses the Apiiro Data Fabric for contextual grounding to understand, for example:

There is no one way of conducting a threat model. Each organization and AppSec team has their own favored framework for guiding the threat model analysis. Therefore the Guardian threat modeling is grounded on a comprehensive deterministic threat library encompassing and cross-referencing the following multiple industry-standards:

| Framework/ Standard | |

|---|---|

| STRIDE | Classifies each threat by type (Spoofing, Tampering, Repudiation, Info Disclosure, DoS, Elevation of Privilege) |

| CIA Triad | Flags which security properties are impacted – Confidentiality, Integrity, Availability |

| CAPEC | Maps to the attack pattern used to exploit the weakness (e.g. CAPEC-125 Flooding) |

| CWE | Links to the specific software weakness (e.g. CWE-400 Resource Exhaustion |

| OWASP Top 10 | Ties to the relevant OWASP risk category (e.g. A04:2021 Insecure Design) |

| MITRE ATT&CK | References the adversary technique (e.g. T1499 Endpoint DoS) |

| OWASP ASVS | Points to the verification requirement for testing (e.g. V5.1.1) |

| NIST | Maps to the applicable NIST security control (e.g. AC-3 Access Enforcement) |

| ISO 27001 | Links to the relevant ISO control clauses (e.g. A.12.1.3, A.13.1.1) |

The Guardian Agent extracts all the data needed for the threat model from the design or from the code itself. No tedious questionnaire or generic assumptions on the implementation architecture. It then performs a comprehensive analysis of the proposed design, identifying potential threats across multiple layers including:

While identifying potential threats is an important first step, the real complexity – and where Apiiro’s threat modelling unique value resides – lies in the ‘last mile’ of accuracy and actionability. Thus, for every identified threat, the Agent provides:

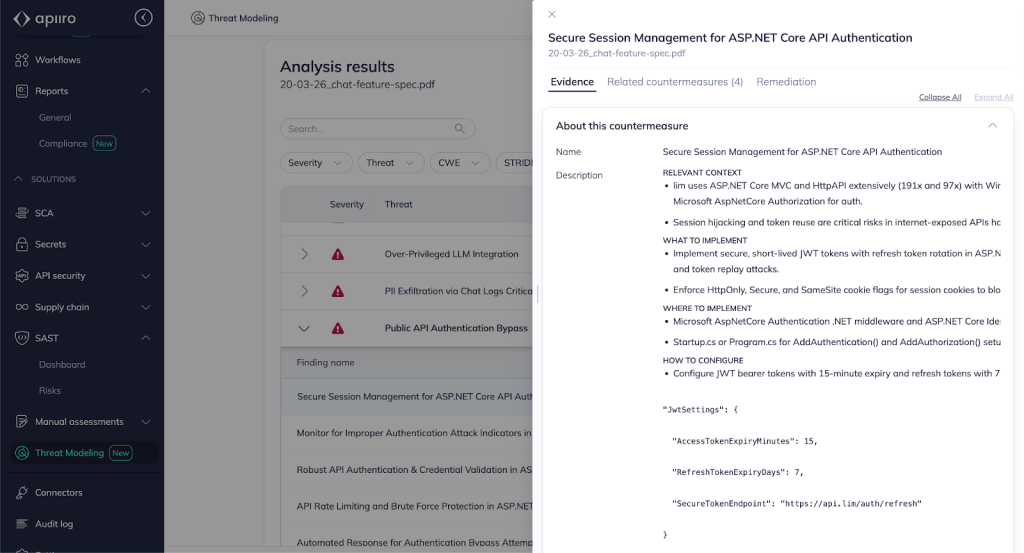

With the Apiiro Data Fabric as critical context, the Agent will explain and provide design and code evidence for any threat selected: What is the potential vulnerability, attack path, exploitation method and technical consequences of exploitation. Moreover, instead of generic advice, the Agent will use this context to provide countermeasures implementation actionable guidance tailored to your specific architecture. It will provide all the relevant codebase context for the countermeasure implementation: What, where and how to implement, evidence why it will work and guidance on how to verify.

To demonstrate the power of this capability, let’s look at an imaginary but common scenario:

A platform team is building a new feature: an AI-powered chat assistant embedded in their product. The design doc describes a Claude integration and a context enrichment layer that fetches and feeds internal data into the results.

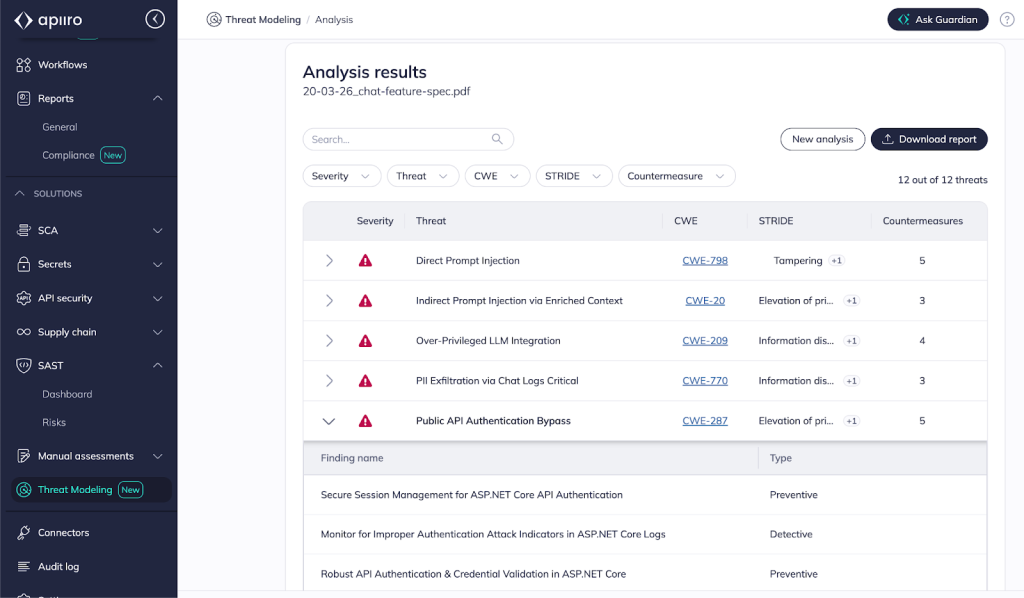

Based on the design doc, The Guardian Agent maps potential threats and suggests concrete countermeasures across all these new external data flows in a single, comprehensive pass.

| Identified Contextual Threat *Example* | Generic Countermeasure (Traditional tools) | Contextual Countermeasure (Guardian Agent) |

|---|---|---|

| Direct Prompt Injection — The assistant is embedded in the internet-facing product, but the EnrichmentService brings internal data — user records, project metadata — into the LLM context for every query. The model is sitting on a cross-system internal data trove. Without adequate boundaries, any user is one prompt away from accessing it. | Validate all user inputs before passing to the LLM. Implement content filtering to detect and block known prompt injection patterns. Apply prompt engineering best practices such as system prompt hardening and role-based instructions. | Separate the data channel from the instruction channel at prompt assembly — user input and enrichment content are untrusted data and must never be interpretable as model instructions. Minimize the LLM’s authority to read-only inference, on relevant data only; Deny list sensitive and redundant endpoints in the internet-exposed RestSharp API using the existing ResponseMiddleware at src/Middleware/ResponseMiddleware.cs:31. |

| Indirect Prompt Injection via Enriched Context — The enrichment layer indexes Confluence pages, GitHub READMEs, and the internal knowledge. A malicious instruction embedded in any indexed document could potentially fire on content retrieval, while the chat prompt itself could look naive, passing validations. | Sanitize and isolate all externally-sourced data before including it in LLM context. Restrict the assistant’s available actions and data access to the minimum required for its function. Apply output validation before rendering or executing LLM responses. | Scope enrichment retrieval to the requesting user’s Okta permissions — not a service account with org-wide read access. Strip markup artifacts, HTML comments, invisible unicode, embedded metadata — from Confluence and GitHub API responses before they enter the prompt. Restrict indexing to approved repositories and document spaces to reduce the write surface attackers can use to plant instructions. Log enrichment retrievals through the existing Microsoft.Extensions.Logging pipeline. |

| Over-Privileged LLM Integration — A single shared credential powers all 108 external call sites, including the enrichment layer’s own data. A credential compromise doesn’t just expose the chat feature; it exposes every project, user, and risk assessment in the platform. | Apply the principle of least privilege to all third-party API integrations. Conduct periodic access reviews to ensure credentials are scoped appropriately. Rotate API keys on a regular schedule and store them in a secrets manager. | Provision the chat feature with a dedicated, minimal credential for LLM inference only — don’t use the wide access ApiCredential at src/Startup.cs:43 as is. Per-service key definitions already exist in infra/api_keys.tf; wire the scoped chat feature’s credential through the HashiCorp Vault integration. |

| PII Exfiltration via Chat Logs — An attacker or insider who reaches the new chat_history collection gains a cross-referenced PII trove — user records, project metadata, risk assessments. It’s a higher-value, lower-effort target than any of the source systems it draws from. | Classify all sensitive data and encrypt PII at rest and in transit. Implement data loss prevention controls across storage and communication channels. Enforce retention policies and ensure compliance with GDPR, CCPA, and other applicable privacy regulations. | Redact PII from enrichment payloads before they reach the conversation logger. Encrypt any fields that must be retained using the existing Cryptography .NET library already in use across 13 call sites. Restrict read access to chat_history — the aggregated collection must not grant broader access than the source MongoDB collections the data originated from. |

The ultimate value of threat modeling lies in its translation from a theoretical exercise into proactive prevention. By leveraging the Apiiro Data Fabric, the Guardian Agent ensures that countermeasures are not abstract security requirements, but have a targeted implementation plan that matches the frameworks, file structures, and security standards already present in the code.

These implementation plans are dynamically written and adjusted to align with your specific tech stack and existing architectural logic. Because the Agent reasons over the real-world Software Graph™, rather than relying on static or “imaginary” architecture diagrams, it ensures that every security instruction is grounded in the actual state of your application. Moreover, it guides the usage of existing controls already in place in the codebase, thus reducing technical debt and the overall code hygiene and complexity.

This level of contextual precision means that whether a human developer or an AI coding agent receives these countermeasures, the process of translating them into actual secure code happens effortlessly and accurately, effectively removing the manual friction that typically follows a design review.

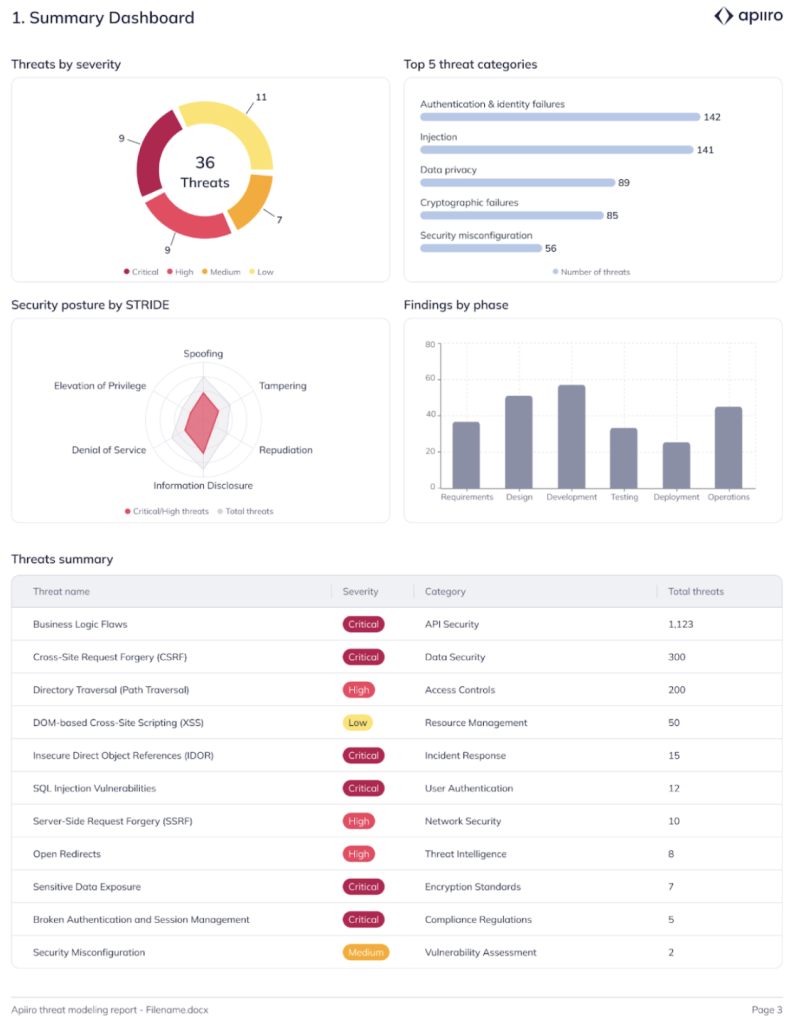

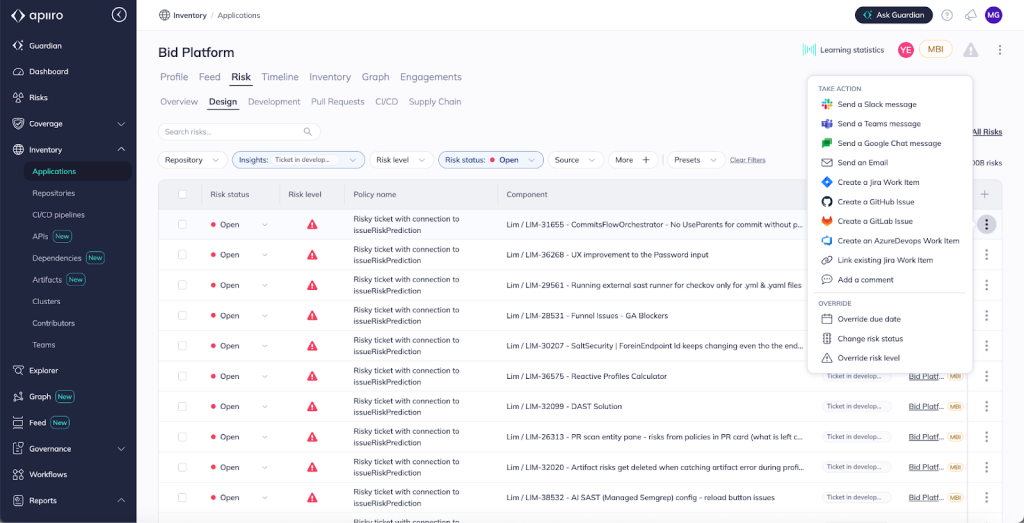

Traditional threat modeling often results in a static document – typically a PDF – that becomes obsolete the moment it is saved. By contrast, threat models generated by the Guardian Agent are living entities integrated directly into the Apiiro Data Fabric. Every identified risk and its associated countermeasure is stored within the platform, allowing you to manage the full risk lifecycle — including ownership, SLA tracking, and formal exception workflows — just as you would with a vulnerability found in code. This data remains accessible through the Apiiro Risk Graph and Explorer, providing a persistent, searchable history of design-stage security decisions. For organizations facing regulatory or internal audits, this information flows directly into Compliance reporting, offering a clear, evidence-based trail that proves security was considered and addressed before implementation began.

One of the most powerful aspects of embedding threat modeling into the Data Fabric is the ability to perform continuous validation. Coming soon, the Guardian Agent will be able to compare the intended secure design (the threat model) with the actual implementation (the code) as part of the PR process, before merge. If:

Guardian Agent will flag this drift.

Guardian AI Threat Modeling is tightly integrated into the daily workflows of both engineering and application security teams.

Developers often view security as a roadblock, a series of “no’s” delivered too late in the process, or vague guidelines that require heavy lifting in order to translate to actual implementation. Guardian Agent changes this by becoming a Developer-Native ally.

Security teams are often stuck in a cycle of manual ticket-pushing. Guardian AI Threat Modeling allows them to transition to high-level strategic governance:

To put our new AI Threat Modeling impact into perspective, let’s look at the ‘before and after’ of a development team tasked with building a new feature: embedding an AI-powered chat assistant in their product, and see how this new Guardian Agent capability helps.

The Traditional Way: The developer creates a Jira ticket and a related design document. They might search for generic integration patterns. Weeks later, during a routine security review, the AppSec team discovers that the chatbot integration handles sensitive user data but lacks proper authentication and logs PII in plain text. The code must be rewritten, delaying the feature release.

By delivering AI powered Threat Modeling as a core capability of the Guardian Agent, we are moving beyond static, manual exercises toward a continuous, code-aware security design:

A private preview of AI Threat Modeling is available today. Schedule a demo here. We are eager to get your feedback and to further improve.