Generative AI (GenAI) has taken the world by storm. With a wide array of use cases, quick answers to complex challenges, and seemingly limitless potential, the technology has rapidly become deeply embedded in our home and work lives. While adopting GenAI offers great promise for development teams, there is still a plethora of unanswered questions for security and IT leaders, who haven’t been able to get visibility into how different teams across the organization are using GenAI, what new frameworks are being introduced, and how these affect the risk the organization carries. Questions like ‘How can we ensure our data remains secure?’ and ‘How can we ensure compliance with regulatory and legal requirements?’ are at the forefront of security teams’ journey into the uncharted territory of GenAI.

With growing security and legal ramifications around privacy, data leakage, and organizational intellectual property, we’re left to wonder… have we opened Pandora’s Box?

There’s no turning back now, but that doesn’t mean that you can’t strategically embrace GenAI to advance software development. Taking a visibility-first approach will empower you to understand how it’s being used across your organization, assess and address new risks in your attack surface, and begin to define and enforce policies across design, development, testing, and production to govern its usage.

That’s why we are thrilled to extend Apiiro’s application security posture management (ASPM) platform to include automatic detection of GenAI frameworks used across your codebase. This new capability, enabled by our simple API-based integration with source control managers, lets AppSec teams detect GenAI frameworks, rapidly prioritize and remediate risk introduced by these frameworks, and protect against future threats with policies and developer guardrails.

Our extensive ASPM capabilities—such as risk prioritization, remediation guidance, workflows, policies, and reporting—all extend to GenAI frameworks and their risks, making it easier than ever to manage risk and responsibly reap the benefits of GenAI.

In this post, we’ll explore how Apiiro helps teams harness the power of GenAI by:

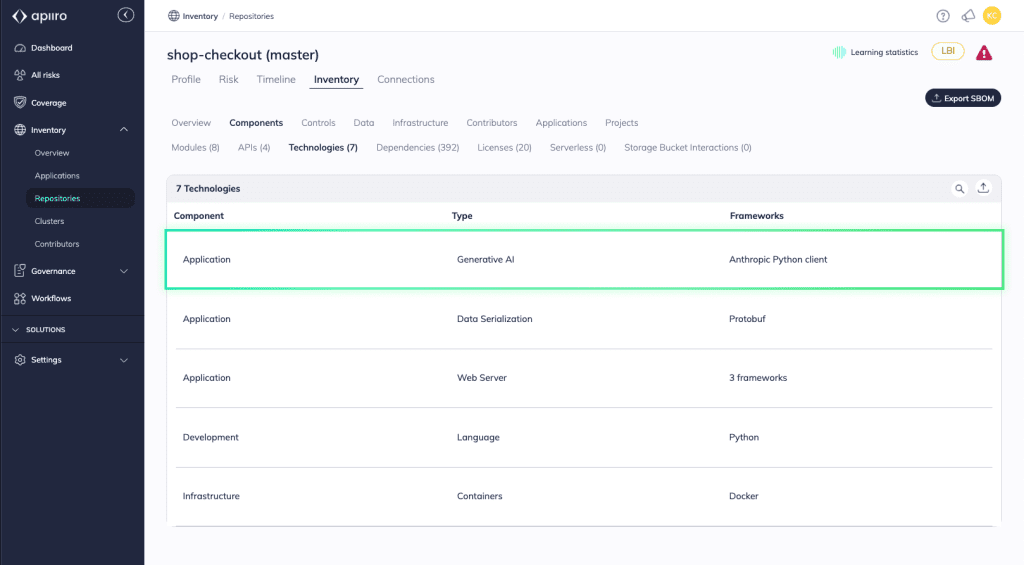

The first step to managing GenAI usage in your development process is to determine whether frameworks are being used in your code and where. In Apiiro, you can automatically identify GenAI frameworks, reveal shadow GenAI lurking in your codebase, and continuously map these framework instances across your attack surface. Apiiro currently identifies numerous GenAI frameworks, including VertexAI, OpenAI, LangChain, HuggingFace, GPT4ALL, Anthropic, SageMaker, and Azure ML AI, with more to come!
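To make the idea of framework detection concrete, here is a minimal sketch that scans Python source for imports of well-known GenAI packages. It is not how Apiiro detects frameworks; the `GENAI_PACKAGES` mapping and the `find_genai_imports` helper are hypothetical names chosen for illustration, and a real scanner would also need to cover other languages, dependency manifests, and direct API calls.

```python
import ast

# Hypothetical mapping from top-level Python package names to framework labels.
# Illustrative only; this is not Apiiro's detection ruleset.
GENAI_PACKAGES = {
    "openai": "OpenAI",
    "langchain": "LangChain",
    "transformers": "HuggingFace",
    "anthropic": "Anthropic",
    "gpt4all": "GPT4ALL",
    "vertexai": "VertexAI",
    "sagemaker": "SageMaker",
}


def find_genai_imports(source: str) -> set[str]:
    """Return the framework labels whose packages are imported in `source`."""
    found = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            modules = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            # Absolute "from package.sub import name" statements only.
            modules = [node.module]
        else:
            continue
        for module in modules:
            top_level = module.split(".")[0]
            if top_level in GENAI_PACKAGES:
                found.add(GENAI_PACKAGES[top_level])
    return found


sample = "import os\nfrom langchain.llms import OpenAI\nimport anthropic as a\n"
print(sorted(find_genai_imports(sample)))  # → ['Anthropic', 'LangChain']
```

Parsing the syntax tree rather than searching for strings avoids false positives from comments or string literals that merely mention a framework name.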

When a GenAI framework is detected, it’s mapped to the relevant application and cataloged as a technology component. This information is captured and maintained in your eXtended software bill of materials (XBOM), a real-time, comprehensive inventory with detailed insights around your components and controls, their interconnections, and associated risks. Your XBOM acts as the catalyst to accelerate prevention and remediation efforts when security risks inevitably arise.

Apiiro goes beyond simply detecting these frameworks. By extensively analyzing your code, your unique application architecture, context from tools across your development lifecycle, and runtime signals surrounding the GenAI frameworks in your attack surface, it prioritizes risk to your organization and surfaces important remediation context around each framework’s usage in your code, such as the timeline of change, code owners, and dependencies. With this context, you can easily determine when the GenAI framework was added, what Jira ticket it’s tied to, and what change was made, and then expedite remediation to safeguard against risk.

Apiiro also ties GenAI frameworks to code owners so that teams can flag routine offenders, educate them about secure coding methods, familiarize them with existing frameworks like OWASP’s Top 10 for LLMs and NIST’s AI Risk Management Framework, and provide a path for them to improve.

Armed with this insight, you can not only identify where GenAI is present in your code, but you can also evaluate the frameworks being used, understand the implications of these frameworks when it comes to securing your data, and create policies around these frameworks to help control and prevent attack surface sprawl.

Anytime someone in your organization moves beyond enterprise-authorized applications for completing business tasks, there’s the potential danger of exposing data that should be protected, and GenAI usage is no different. One example stems from an experiment with OpenAI’s GPT-2 where researchers were testing the model’s limits and discovered that it memorized and could regurgitate personally identifiable information (PII) such as names, emails, X (formerly Twitter) handles, and phone numbers that were originally included in the training dataset.

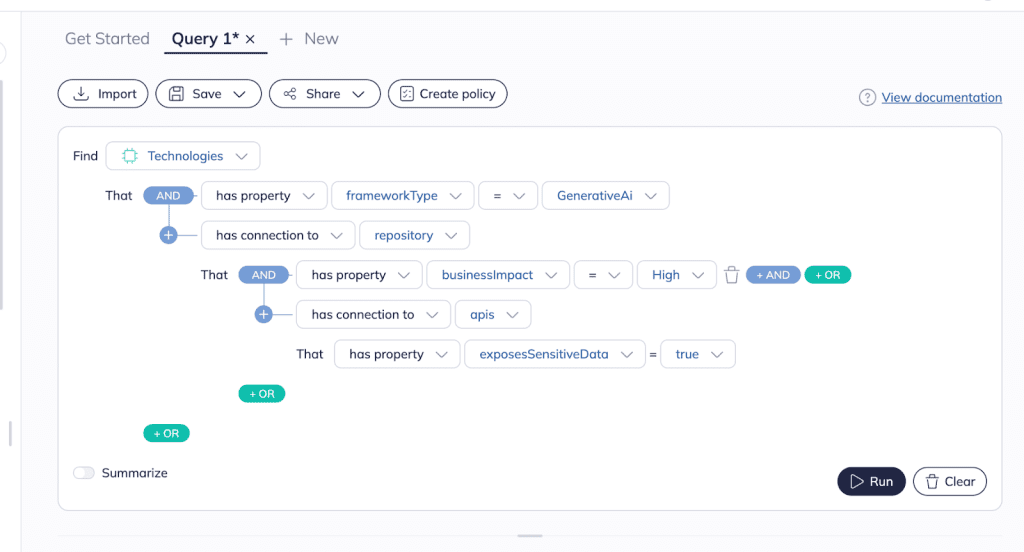

To help prevent this within your software development lifecycle, Apiiro makes it possible to identify risky combinations of GenAI usage and sensitive data.

Using the Risk Graph Explorer, our query-based tool that allows you to ask and answer even the most advanced questions about your applications and software supply chains, you can pinpoint GenAI frameworks in code models that handle sensitive data.

Isolating these models can also help illuminate opportunities for design standards and developer guardrails that can prevent future risk from materializing.
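As a rough illustration of the kind of question described above, the sketch below filters a small in-memory inventory for entries that both use a GenAI framework and handle sensitive data. It does not represent the Risk Graph Explorer or its query language; the `CodeModule` record, the `SENSITIVE_DATA_TYPES` set, and the sample inventory are all hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical labels for data an organization treats as sensitive.
SENSITIVE_DATA_TYPES = {"PII", "PHI", "payment"}


@dataclass
class CodeModule:
    """Hypothetical inventory record for one piece of an application."""
    name: str
    genai_frameworks: set[str] = field(default_factory=set)
    data_types: set[str] = field(default_factory=set)


def risky_modules(modules: list[CodeModule]) -> list[str]:
    """Names of modules that use GenAI and also handle sensitive data."""
    return [
        m.name
        for m in modules
        if m.genai_frameworks and m.data_types & SENSITIVE_DATA_TYPES
    ]


inventory = [
    CodeModule("billing-service", {"OpenAI"}, {"PII", "payment"}),
    CodeModule("docs-search", {"LangChain"}, {"public-docs"}),
    CodeModule("user-profile", set(), {"PII"}),
]
print(risky_modules(inventory))  # → ['billing-service']
```

Only the intersection is flagged: GenAI over public data and sensitive data without GenAI are both left out, which keeps the result focused on the risky combination.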

Managing secrets is notoriously challenging, and adding GenAI into the mix only compounds the complexity and the risk of these secrets spilling into places they shouldn’t. Take the infamous ChatGPT ‘Grandma Exploit’, for example, where users managed to bypass the chatbot’s safety rules and obtain Windows 10 Pro keys by asking it to pretend to be a dead grandmother. Yikes. 😬

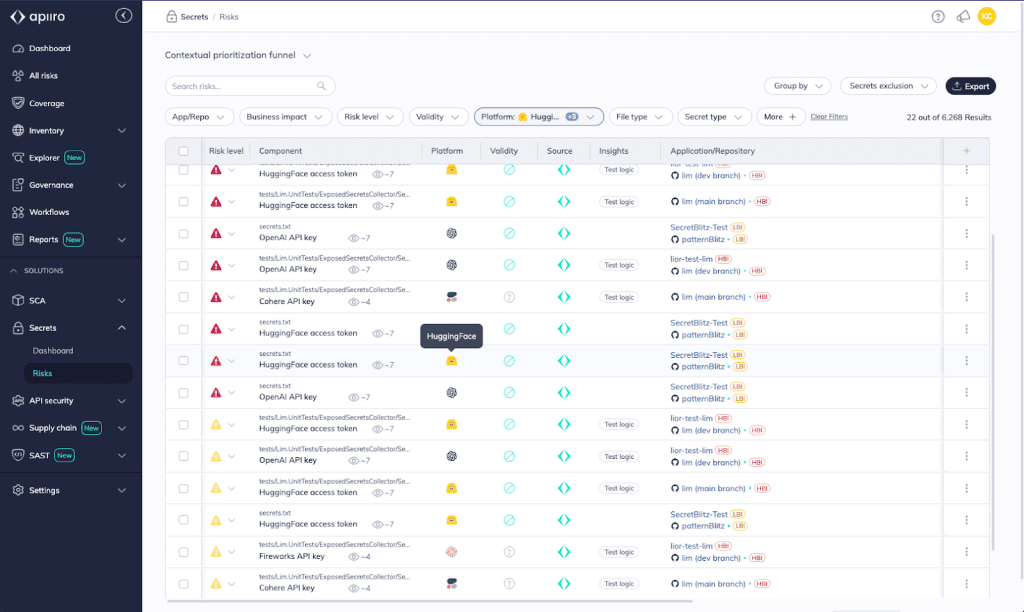

To properly protect your secrets, Apiiro makes it easy for you to detect and secure secrets that are tied to GenAI frameworks.

All risks associated with secrets are already correlated and prioritized in the Apiiro platform, thanks to our native secrets security solution’s advanced detection algorithms and deep code-based analysis. Now, you’ll be able to isolate and remediate secrets risks that are exacerbated by GenAI as a new attack vector.

With vital contextual information like whether a secret is valid or revoked, all of the occurrences of this secret across your codebase, and the history and owner of this secret in code, Apiiro empowers you to understand if and when secrets are tied to GenAI frameworks in code, prioritize and remediate risky secrets, and take action to prevent potential exploitation and misuse.
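For a sense of what "a secret tied to a GenAI framework" can look like in code, here is a deliberately simple sketch that flags string literals assigned to provider-named key or token variables. This is an assumption-heavy heuristic, not Apiiro's secrets detection: the regular expression, the provider names it looks for, and the `find_hardcoded_genai_secrets` helper are hypothetical, and it makes no attempt to check whether a secret is valid or revoked.

```python
import re

# Assumption: hardcoded secrets show up as string literals assigned to names
# such as OPENAI_API_KEY or anthropic_token. Real detectors use far richer signals.
HARDCODED_SECRET = re.compile(
    r"""\b(?P<name>(?:OPENAI|ANTHROPIC|HUGGINGFACE)\w*(?:KEY|TOKEN))\s*=\s*["'](?P<value>[^"']+)["']""",
    re.IGNORECASE,
)


def find_hardcoded_genai_secrets(source: str) -> list[tuple[int, str]]:
    """Return (line number, variable name) for each suspicious assignment."""
    hits = []
    for lineno, line in enumerate(source.splitlines(), start=1):
        match = HARDCODED_SECRET.search(line)
        if match:
            hits.append((lineno, match.group("name")))
    return hits


sample = (
    'OPENAI_API_KEY = "not-a-real-key"\n'
    'key = os.environ["OPENAI_API_KEY"]\n'
)
print(find_hardcoded_genai_secrets(sample))  # → [(1, 'OPENAI_API_KEY')]
```

The second line of the sample is not flagged because it reads the key from the environment instead of embedding the value in source.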

Innovation doesn’t have to be at the expense of security. In this case, governance is the glue binding them together. Through policies and workflows in Apiiro, you can proactively manage and regulate GenAI usage in your development lifecycle.

To enforce acceptable use, compliance, and data privacy rules related to GenAI that are specific to your organization’s needs, you can create policies in Apiiro around specific GenAI frameworks and the conditions around their usage. Then, with Apiiro’s automated workflows, you can determine the appropriate response—whether that’s blocking a pull request or build or triggering processes like a threat model or design review.
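The policy-then-response pattern described above can be sketched in a few lines. The example below is a hypothetical model, not Apiiro's policy engine or configuration format: the `Policy`, `Change`, and `Action` types and the `evaluate` function are invented for illustration. Each policy names a framework, an optional sensitive-data condition, and a response, and the strictest matching response wins.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    REQUIRE_DESIGN_REVIEW = "require_design_review"
    BLOCK_PULL_REQUEST = "block_pull_request"


# Responses ordered from least to most strict (hypothetical ordering).
STRICTNESS = [Action.ALLOW, Action.REQUIRE_DESIGN_REVIEW, Action.BLOCK_PULL_REQUEST]


@dataclass(frozen=True)
class Change:
    """Hypothetical summary of a pull request."""
    frameworks: frozenset
    touches_sensitive_data: bool


@dataclass(frozen=True)
class Policy:
    framework: str
    only_if_sensitive: bool
    action: Action


def evaluate(change: Change, policies: list[Policy]) -> Action:
    """Return the strictest action among the policies that match the change."""
    result = Action.ALLOW
    for policy in policies:
        if policy.framework not in change.frameworks:
            continue
        if policy.only_if_sensitive and not change.touches_sensitive_data:
            continue
        if STRICTNESS.index(policy.action) > STRICTNESS.index(result):
            result = policy.action
    return result


policies = [
    Policy("OpenAI", only_if_sensitive=False, action=Action.REQUIRE_DESIGN_REVIEW),
    Policy("OpenAI", only_if_sensitive=True, action=Action.BLOCK_PULL_REQUEST),
]
pr = Change(frozenset({"OpenAI"}), touches_sensitive_data=True)
print(evaluate(pr, policies).value)  # → block_pull_request
```

Choosing the strictest match means a broad "review everything" rule never weakens a narrower blocking rule for sensitive data.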

These policies and workflows are the building blocks of your defenses and help ensure you’re able to meet specific legal and regulatory requirements.

Apiiro’s contextual, multidimensional approach to application security delivers the rich insights you need to understand how and when GenAI is used in your organization, assess the risk and severity it carries, and govern its use to continuously prevent risk. To see if GenAI frameworks are lurking in your attack surface, schedule a demo with our team.